7 AI Breakthroughs from 2025 You Missed (and Why They Matter)

2025 was loud. Headlines shouted about chatbots, lawsuits, and who trained what on whose data. Meanwhile, the real AI breakthroughs of 2025 slipped in through the side door, put on a name tag, and started doing actual work.

These weren’t magic tricks. They were the kind of improvements that show up in your support inbox, your design workflow, and yes, sometimes in a clinic, helping a nurse decide who needs attention first.

Here are seven updates you might’ve missed. Each one comes with a plain-English explanation, why it matters, and one simple takeaway you can use this week.

The big shift in 2025: AI learned to see, hear, and act

For years, “AI” meant typing prompts into a chat box. In 2025, that stopped being the default.

Now the common setup is an AI that can read a doc, look at a screenshot, listen to a call, and then do something with the result. Not “generate a paragraph,” but “open the ticket, update the CRM field, and draft the reply.”

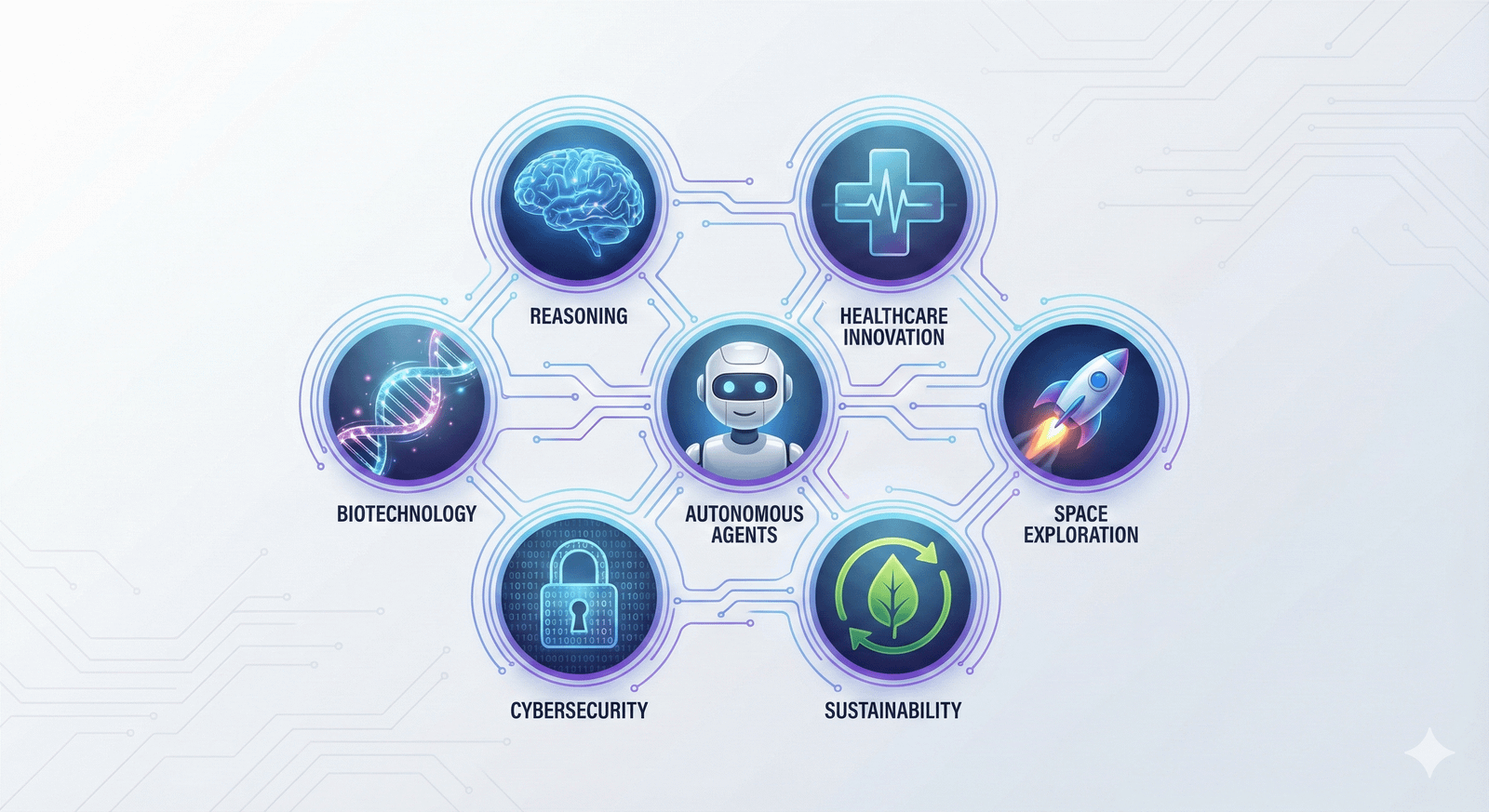

This is the big practical shift behind many of 2025’s AI breakthroughs: less chat, more coordination across media and tools. Google’s year-end recap of research points to the same themes: agents, reasoning, and science moving faster (Google 2025 recap: Research breakthroughs of the year).

Multimodal AI got practical: one model now handles text, voice, images, video, and code

“Multimodal” sounds like a word invented to win a grant. It’s simpler than that: one AI can work with more than one type of input.

Before, you’d use one tool for text, another for images, another for audio, then copy-paste your way into a mess. In 2025, it started to feel normal to toss everything into one place and get one coherent answer.

Everyday examples that became much less painful:

- Upload a messy chart and ask, “What’s the trend, and what should I test next?”

- Talk out loud for 45 seconds and get a usable blog outline (then ask it to rewrite in your brand voice).

- Share a screenshot of a broken settings page and get step-by-step troubleshooting.

- Drop in a product demo video and ask for three ad angles, five hooks, and a landing-page draft.

For creators and marketers, this mattered because production stopped being a relay race. Fewer tools, fewer handoffs, fewer “wait, which version is the final?” moments. Some of the broader “multimodal is the story of 2025” coverage captured that shift well, even if the best proof is your own workflow (Next-Gen AI Models: Why Multimodal Intelligence Is the Real Breakthrough of 2025).

Takeaway: Pick one “mixed input” task (like chart + notes), and make it your default AI test.

Autonomous AI agents moved from demos to real work: they run tasks end-to-end

If multimodal AI is “it understands,” agentic AI is “it does.”

An AI agent is software that takes a goal, breaks it into steps, and completes those steps across tools. You don’t ask it to write an email. You ask it to “resolve these 30 low-priority tickets,” and it works through them, with rules.
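If it helps to see that definition as code, here is a minimal sketch of the goal, steps, tools loop. Everything in it is an assumption made for illustration: the `plan` function is a hard-coded stand-in for what a real agent would ask a model to produce, and the two tools (`tag_ticket`, `draft_reply`) are hypothetical placeholders, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    tool: str      # name of the tool to call (hypothetical)
    argument: str  # input passed to that tool


def plan(goal: str, tickets: list[str]) -> list[Step]:
    """Stand-in planner: a real agent would ask a model to break the goal down."""
    steps: list[Step] = []
    for ticket in tickets:
        steps.append(Step("tag_ticket", ticket))
        steps.append(Step("draft_reply", ticket))
    return steps


# Hypothetical tools. In practice these would call a help desk or CRM.
TOOLS: dict[str, Callable[[str], str]] = {
    "tag_ticket": lambda ticket: f"tagged {ticket} as low-priority",
    "draft_reply": lambda ticket: f"drafted reply for {ticket}",
}


def run_agent(goal: str, tickets: list[str]) -> list[str]:
    """Take a goal, plan the steps, then run each step with the matching tool."""
    results = []
    for step in plan(goal, tickets):
        tool = TOOLS[step.tool]
        results.append(tool(step.argument))
    return results
```

Calling `run_agent("resolve low-priority tickets", ["T-1", "T-2"])` returns four result strings, one per step. The point of the shape is that the goal goes in once and the loop, not the human, walks through the steps.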

In 2025, agents went from flashy demos to real workflows in support, ops, and sales:

- Resetting passwords and verifying identity steps

- Triaging tickets (tagging, routing, drafting replies)

- Updating CRM records after calls

- Monitoring alerts and opening incidents with context

- Scheduling, follow-ups, and status updates

- Basic procurement tasks (like creating a purchase request)

Business-focused write-ups got more honest this year, separating “agent hype” from what teams actually shipped (AI Agents in 2025: Expectations vs. Reality). And if you want the public-interest view (benefits plus risks, written like a human), this overview is worth your time (AI agents arrived in 2025 – here’s what happened and the challenges ahead in 2026).

A quick caution list that kept smart teams out of trouble:

- Approvals for money movement, user access, or external sends

- Logs you can audit (who did what, when, and why)

- Limited access (least privilege, short-lived tokens)

- Human check for high-risk actions (refunds, legal, patient info)

Takeaway: Let an agent handle low-risk tasks first, and treat permissions like loaded tools.

Medicine and health got weirdly better: AI found signals doctors often miss

The sci-fi version of health AI is a robot doctor with perfect bedside manners. The real 2025 version was quieter and more useful: AI spotted patterns that are easy to miss, and it did it fast.

This matters because speed changes outcomes. It also changes access, especially in places without fancy equipment or specialist time. For the broader context of where health and science AI went in 2025, Google Research’s own recap shows how much effort is going into discovery and clinical support (Google Research 2025: Bolder breakthroughs, bigger impact).

Still needed (and still non-negotiable): clinical validation, privacy protections, and bias checks. Helpful tools can still cause harm if they’re sloppy.

A 10-second EKG could flag a hard-to-spot heart problem in seconds

Here’s a breakthrough with real “this helps people this week” energy.

A standard EKG is quick and common. The tricky part is that some heart problems don’t show up clearly to the human eye, especially conditions that are under-recognized or look like other issues.

In December 2025, reporting highlighted AI that can detect signs of coronary microvascular dysfunction from standard EKGs, using a short reading and producing results quickly (AI enables rapid detection of coronary microvascular dysfunction from standard EKGs).

Why that’s a big deal:

- Faster triage, so the right people get attention sooner

- Fewer missed cases that might otherwise bounce between visits

- More support for clinics that don’t have advanced imaging on hand

What it doesn’t do: it doesn’t replace diagnosis. It’s a signal booster, not a final verdict.

If you want another real-world angle on AI reading heart signals, UC Davis Health also covered an AI model improving heart attack detection, which shows the same theme: pattern-finding at speed (New study finds AI model improves heart attack detection).

Takeaway: In health AI, the win is often “faster and earlier,” not “fully automated.”

AI started mapping the gut-brain link to find “brain foods” faster

If your feed served you “one weird food for focus,” you’ve met the problem. Nutrition science is slow, bodies vary a lot, and humans love a shortcut.

In 2025, more research teams used AI models to simulate and sort through gut-brain interactions. In plain terms, they try to predict how nutrients might affect brain health through the gut, then shortlist what’s worth testing in real studies.

Think of it like this: instead of tasting every soup in the world, you ask an assistant to read every recipe, flag likely winners, and tell you which ten to cook.

You’ll often see candidates like citicoline discussed in “brain health” circles, but the key shift is the pipeline. AI helps narrow options faster than trial-and-error.

Why it matters for brands and consumers:

- Shorter research cycles for new formulations

- More targeted hypotheses (less random “add mushrooms” energy)

- Better odds that products are based on something testable

The guardrail: AI can suggest what to study, but it can’t replace human studies. Biology still has a vote.

Takeaway: Treat “AI suggested this nutrient” as a research lead, not a health promise.

New tools changed how we build things, from sketches to chips

A lot of 2025’s AI breakthroughs weren’t about words at all. They were about making real stuff, faster.

This showed up in maker workflows, hardware startups, factories, and product teams that finally got tired of waiting three weeks for a prototype change.

A quick sketch can become a usable 3D CAD model: faster prototyping for everyone

CAD can feel like doing geometry homework with a mouse. It’s powerful, but it’s not friendly.

In 2025, sketch-to-model workflows improved. You draw a rough shape (on a tablet, in a whiteboard app, even on paper with a photo), and AI helps infer the geometry into a starting 3D model.

The practical impact is simple:

- Less time stuck “getting the first model right”

- More time testing fit, grip, assembly, and airflow

- Easier handoff to 3D printing or basic machining

This doesn’t remove the need for skill. It changes where skill matters. Designers spend more time making choices and less time pushing points around.

One caution that keeps teams sane: always verify measurements, material limits, and safety constraints. A model that looks right can still be wrong.

Takeaway: Use sketch-to-3D to get to version one fast, then switch to careful checks.

AI got scary good at finding chip defects without breaking the chip

Modern electronics depend on tiny components behaving perfectly at scale. That’s hard when supply chains stretch, processes drift, and defects hide like they’re playing stealth mode.

A quiet manufacturing win in 2025 was better non-destructive inspection. Using imaging methods (like X-ray-style scans) plus machine learning, teams can spot subtle defects earlier without destroying the part.

Why that matters beyond the factory:

- Less waste, better yields, fewer production surprises

- More reliable devices (phones, cars, medical tools)

- Fewer delays when a bad batch would’ve caused a scramble

You may not see this breakthrough on a billboard, but you’ll feel it when products ship on time and fail less.

If you want the macro view on how fast AI adoption is moving (and how it’s measured), Stanford’s yearly report is a solid grounding point (The 2025 AI Index Report).

Takeaway: The best AI wins are sometimes invisible, until the outage never happens.

The “thinking” upgrade: AI started taking extra steps before it answers

One of the most useful changes in 2025 was also the least flashy: some models got better at not blurting.

Instead of racing to the first plausible answer, reasoning-focused systems spend more compute on planning and checking. For users, this feels like fewer “confident wrong” replies on tricky tasks.

It’s also why agents got more capable. Better planning makes tool use safer and multi-step tasks less chaotic.

If you want a high-level, no-nonsense overview of where LLMs stood in 2025 (progress plus real problems), this summary is widely shared for a reason (The State Of LLMs 2025: Progress, Problems, and Predictions).

Reasoning-first models improved planning, multi-step problem solving, and tool use

You saw the difference when tasks had dependencies or trade-offs, like:

- Writing a project plan that lists steps, owners, and blockers

- Debugging code with a checklist and targeted tests

- Comparing tools with clear pros, cons, and constraints

- Running a research task with sources, summaries, and next steps

The “tool use” part matters a lot. A reasoning-first model can decide when to search, when to calculate, when to ask a clarifying question, and when to stop.
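To make that decision concrete, here is a toy sketch of the "what do I do next?" choice. It uses hand-written rules as a stand-in, which is an assumption for illustration only: real reasoning models make this choice through learned behavior, not an if-chain. The `TaskState` fields and action names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TaskState:
    question_is_clear: bool   # do we know what is being asked?
    have_answer: bool         # has a checked answer already been produced?
    needs_fresh_facts: bool   # does the task depend on information to look up?
    needs_arithmetic: bool    # does the task involve numbers worth computing exactly?


def choose_next_action(state: TaskState) -> str:
    """Pick the next move: ask, stop, search, calculate, or just answer."""
    if not state.question_is_clear:
        return "ask_clarifying_question"
    if state.have_answer:
        return "stop"
    if state.needs_fresh_facts:
        return "search"
    if state.needs_arithmetic:
        return "calculate"
    return "answer_directly"
```

The ordering is the design choice worth noticing: clarify first, stop as soon as there is a checked answer, and only then reach for a tool. That is a rough picture of why better planning tends to mean fewer wasted or risky tool calls.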

Watch out for one thing: reasoning doesn’t equal truth. A model can still make up details, or select weak sources, or miss context. For anything important, verify key facts and keep guardrails around actions.

If you like keeping up with what practitioners say mattered most this year, this end-of-2025 roundup hits many of the same themes: agents, reasoning, and real deployment (issue 333).

Takeaway: Ask for a plan with checks, not just an answer, then verify the risky parts.

Conclusion

The sneakiest AI breakthroughs of 2025 weren’t loud. They were useful: multimodal models that handle text, voice, images, video, and code; agents that complete tasks end-to-end; health tools that catch hard-to-spot signals; build tools that turn sketches into prototypes; inspection AI that finds defects early; and reasoning upgrades that make multi-step work less messy.

Pick one breakthrough to test this week (a multimodal workflow, a small agent, or a sketch-to-model tool). Then pick one safety habit to keep, like tight permissions, clean logs, or a human review step for anything high-risk. Progress is fun; control is smarter.

FAQ

What is multimodal AI and why is it important in 2025?

Multimodal AI refers to models that can work with more than one type of input, such as text, voice, images, video, and code. It mattered in 2025 because work that used to need several tools and a lot of copy-pasting could happen in one place, with one coherent answer.

How do AI agents from 2025 complete tasks end-to-end?

An AI agent takes a goal, breaks it into steps, and completes those steps across tools, within rules you set. Better planning in 2025’s reasoning-focused models made multi-step workflows less chaotic, though high-risk actions still call for human approval.

What are the key safety habits recommended for implementing new AI technologies?

Essential AI safety habits include establishing tight permissions for AI access, maintaining clean and auditable logs of AI operations, and incorporating a human review step for any high-risk AI-driven decisions or outputs to ensure control and ethical deployment.

Can AI truly turn sketches into prototypes in 2025?

Yes, as a starting point. Sketch-to-model tools improved in 2025, so a rough drawing can become a starting 3D model that is ready for refinement, 3D printing, or basic machining. You still need to verify measurements, material limits, and safety constraints before trusting the result.
